Music Mixing Techniques | Vibepedia

Music mixing is the critical process of combining individual multitrack recordings into a final, polished audio product. It involves meticulously adjusting…

Contents

- 🎵 Origins & History

- ⚙️ How It Works

- 📊 Key Facts & Numbers

- 👥 Key People & Organizations

- 🌍 Cultural Impact & Influence

- ⚡ Current State & Latest Developments

- 🤔 Controversies & Debates

- 🔮 Future Outlook & Predictions

- 💡 Practical Applications

- 📚 Related Topics & Deeper Reading

- Frequently Asked Questions

- References

- Related Topics

🎵 Origins & History

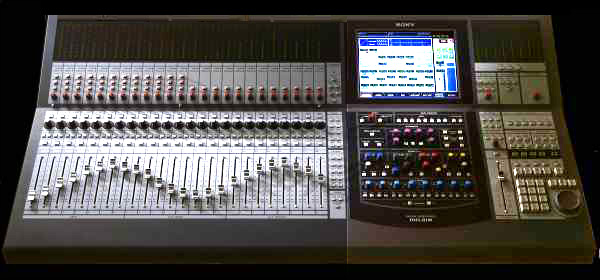

The roots of music mixing stretch back to the early days of [[recording-technology|recording technology]] in the late 19th and early 20th centuries. Initially, recording was a live, single-take affair, often capturing multiple instruments simultaneously onto a single [[wax-cylinder-recording|wax cylinder]] or [[gramophone-record|gramophone record]]. The concept of 'mixing' as we know it emerged with the advent of [[multitrack-recording|multitrack recording]] in the 1940s and 50s, pioneered by artists like [[les-paul|Les Paul]], who experimented with layering sounds, and later taken up by studios like [[abbey-road-studios|Abbey Road Studios]]. Early mixing involved physically re-recording tracks onto new tapes, a laborious process that nonetheless allowed for unprecedented creative control. The development of the [[mixing-console|mixing console]] by companies like [[neve-electronics|Neve]] and [[api-audio|API]] in the 1960s and 70s streamlined the process further, offering dedicated faders, EQs, and auxiliary sends for each channel and laying the groundwork for the complex mixes of rock, funk, and disco.

⚙️ How It Works

At its core, music mixing is about balancing and enhancing individual sonic elements within a song. A [[mixing-engineer|mixing engineer]] typically receives multitrack session files, each containing a single instrument or vocal. The first step is often gain staging: setting each track's signal level high enough to stay clear of the noise floor but low enough to leave headroom and avoid distortion. Then comes equalization (EQ) to shape the tonal character of each sound, cutting unwanted frequencies and boosting desirable ones. Compression is applied to control dynamic range: it attenuates the loudest passages, and makeup gain can then raise the overall level, so quiet parts sit closer to loud ones for consistency and impact. Panning places sounds in the stereo field, creating width and separation. Effects like [[reverb|reverb]] and [[delay|delay]] add space and depth, while [[automation|automation]] allows for dynamic changes in levels, panning, and effects over time, bringing the mix to life.
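The effect of compression on levels can be sketched with a static, hard-knee gain curve. This is a minimal illustration, not how any particular compressor plugin works: the function names, the -18 dB threshold, and the 4:1 ratio are assumptions chosen for the example, and real compressors also smooth gain changes with attack and release times.

```python
import math


def db_to_linear(db: float) -> float:
    """Convert a level change in decibels to a linear amplitude factor."""
    return 10.0 ** (db / 20.0)


def linear_to_db(amplitude: float) -> float:
    """Convert a linear amplitude (must be > 0) to decibels."""
    return 20.0 * math.log10(amplitude)


def compress_level_db(level_db: float, threshold_db: float = -18.0,
                      ratio: float = 4.0, makeup_db: float = 0.0) -> float:
    """Static hard-knee compressor curve operating on levels in dB.

    Levels at or below the threshold pass unchanged. The portion of the
    level above the threshold is divided by the ratio. Makeup gain is
    then added to every level. Threshold and ratio are illustrative.
    """
    if level_db > threshold_db:
        level_db = threshold_db + (level_db - threshold_db) / ratio
    return level_db + makeup_db


# A loud passage at -6 dB and a quiet one at -30 dB start 24 dB apart.
loud_out = compress_level_db(-6.0, makeup_db=6.0)    # -9.0
quiet_out = compress_level_db(-30.0, makeup_db=6.0)  # -24.0
print(loud_out - quiet_out)  # 15.0
```

With these settings the gap between the two passages shrinks from 24 dB to 15 dB: the loud passage is pulled down by the ratio, and the makeup gain lifts both.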

📊 Key Facts & Numbers

The global market for audio mixing and mastering services is estimated to be worth billions of dollars annually, with projections indicating continued growth. A single professional mixing session can cost anywhere from $100 to $1,000 per song, depending on the engineer's experience and the project's scope. Modern digital audio workstations (DAWs) like [[pro-tools|Pro Tools]] and [[ableton-live|Ableton Live]] can handle hundreds of tracks simultaneously, a far cry from the 2-track limitations of early stereo recordings. The average [[pop-music|pop song]] today often features 50-100 individual tracks, with some electronic music productions exceeding 200. Mastering, the final stage after mixing, can raise a track's level by up to 6 dB, which roughly doubles the signal amplitude and is heard as a clear jump in loudness.
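The decibel figures above can be checked with a little arithmetic. The sketch below converts a gain in dB to an amplitude ratio, and estimates how much the combined level rises when many tracks are summed. The function names are hypothetical, and the summing estimate assumes the tracks are uncorrelated and all sit at the same RMS level, which real sessions only approximate.

```python
import math


def amplitude_ratio(gain_db: float) -> float:
    """Linear amplitude ratio corresponding to a gain in decibels."""
    return 10.0 ** (gain_db / 20.0)


def summed_level_db(track_level_db: float, track_count: int) -> float:
    """Estimated RMS level of N summed tracks.

    Assumes every track has the same RMS level and that the tracks are
    uncorrelated, so their powers (not amplitudes) add.
    """
    if track_count < 1:
        raise ValueError("track_count must be at least 1")
    return track_level_db + 10.0 * math.log10(track_count)


print(round(amplitude_ratio(6.0), 3))  # 1.995
print(summed_level_db(-30.0, 100))     # -10.0
```

Under these assumptions, 100 tracks at -30 dB each sum to about -10 dB, which is one reason gain staging matters more as track counts grow.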

👥 Key People & Organizations

Legendary mixing engineers like [[geoff-tucker|Geoff Emerick]], known for his work with [[the-beatles|The Beatles]], and [[bob-clearmountain|Bob Clearmountain]], who has mixed for [[david-bowie|David Bowie]] and [[rolling-stones|The Rolling Stones]], set early benchmarks. Contemporary titans include [[serban-ghinea|Serban Ghenea]], whose credits include a long list of [[billboard-hot-100|Billboard Hot 100]] chart-toppers for artists like [[taylor-swift|Taylor Swift]] and [[the-weeknd|The Weeknd]]. Major studios like [[electric-lady-studios|Electric Lady Studios]] in New York and [[sunset-sound-recorders|Sunset Sound Recorders]] in Los Angeles have been crucibles for innovation. Software developers like [[avid-technology|Avid]] (Pro Tools) and [[ableton-ag|Ableton]] (Ableton Live) are also key players, shaping the tools engineers use daily.

🌍 Cultural Impact & Influence

Music mixing techniques profoundly shape listener perception and the emotional impact of a song. The 'loudness war', which escalated through the 1990s and 2000s, was driven by aggressive compression and limiting, producing mixes that were sonically fatiguing but perceived as 'louder' on radio. Albums from this era, such as Metallica's 'Death Magnetic' (2008), sparked significant debate about artistic intent versus commercial pressure. Other techniques serve subtler goals: [[mid-side-eq|mid-side EQ]] processes the center (mid) and the stereo difference (side) of a mix separately, allowing precise control over the stereo image and creating wider, more immersive experiences. The rise of [[dolby-atmos|Dolby Atmos]] mixing for spatial audio is further pushing boundaries, enabling artists and engineers to create 3D soundscapes that envelop the listener, moving beyond traditional stereo.
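Mid-side processing rests on a simple sum-and-difference transform of the left and right channels. The sketch below shows the encode and decode steps on plain lists of samples, with a single side-channel gain standing in for the per-band EQ a real mid-side equalizer would apply. The function names and the `side_gain` parameter are assumptions made for illustration.

```python
def encode_mid_side(left, right):
    """Split stereo samples into mid (sum) and side (difference) signals."""
    mid = [(l + r) / 2.0 for l, r in zip(left, right)]
    side = [(l - r) / 2.0 for l, r in zip(left, right)]
    return mid, side


def decode_mid_side(mid, side):
    """Rebuild left and right samples from mid and side signals."""
    left = [m + s for m, s in zip(mid, side)]
    right = [m - s for m, s in zip(mid, side)]
    return left, right


def adjust_width(left, right, side_gain=1.5):
    """Scale the side signal only: >1 widens the image, <1 narrows it.

    A plain gain is used here in place of an EQ on the side channel.
    """
    mid, side = encode_mid_side(left, right)
    side = [s * side_gain for s in side]
    return decode_mid_side(mid, side)


left, right = [1.0, 0.5], [0.0, 0.5]
print(adjust_width(left, right, side_gain=2.0))
# ([1.5, 0.5], [-0.5, 0.5])
```

The second sample is identical in both channels, so it has no side component and passes through unchanged; only the content that differs between left and right is affected.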

⚡ Current State & Latest Developments

The current landscape of music mixing is dominated by digital workflows and the increasing accessibility of powerful DAWs. Remote mixing, facilitated by cloud-based collaboration platforms like [[splice-com|Splice]] and [[audiomovers|Audiomovers]], allows engineers to work with artists and producers globally. AI-assisted mixing and mastering tools, such as [[izotope-ozone|iZotope's Ozone]] and [[landr-com|LANDR]], are emerging, offering automated suggestions for EQ, compression, and mastering. While these tools democratize the process, they also spark debate about the role of human artistry versus algorithmic efficiency. The demand for high-resolution audio and immersive formats like [[dolby-atmos|Dolby Atmos]] continues to grow, pushing engineers to adapt their techniques for new playback systems.

🤔 Controversies & Debates

One of the most enduring controversies in mixing revolves around the 'loudness war.' Critics argue that excessive dynamic range compression sacrifices musicality and emotional nuance for sheer volume, leading to listener fatigue. This debate intensified in the early 2000s, with many artists and engineers advocating for more dynamic mixes. Another point of contention is the increasing reliance on AI and automated mixing tools. While proponents hail them as democratizing forces, skeptics worry they devalue the craft and unique sonic signatures developed by experienced engineers. The debate over the 'correct' way to mix for immersive audio formats like [[dolby-atmos|Dolby Atmos]] is also ongoing, with different engineers and artists exploring varied approaches to spatialization.

🔮 Future Outlook & Predictions

The future of music mixing will likely see a deeper integration of [[artificial-intelligence|artificial intelligence]] and machine learning. AI could become an indispensable co-pilot for engineers, handling repetitive tasks, suggesting creative options, and even predicting listener preferences. The expansion of spatial audio formats like [[dolby-atmos|Dolby Atmos]] and [[sony-360-reality-audio|Sony 360 Reality Audio]] will continue to drive innovation in immersive mixing techniques, potentially leading to entirely new sonic paradigms. Furthermore, as [[virtual-reality|virtual reality]] and [[augmented-reality|augmented reality]] technologies mature, we may see the emergence of 'virtual studios' where engineers can collaborate and mix in shared, interactive 3D spaces, blurring the lines between the physical and digital realms.

💡 Practical Applications

Music mixing techniques are fundamental to virtually any recorded audio production. In [[film-scoring|film scoring]], precise mixing ensures dialogue clarity, enhances dramatic tension with sound effects, and integrates the musical score seamlessly. For [[video-game-audio|video game audio]], mixing creates immersive soundscapes that respond dynamically to player actions. Podcasters and audiobook narrators rely on mixing to ensure consistent vocal levels and a professional, engaging listening experience. Even in live sound reinforcement, many of the same principles of EQ, compression, and level balancing are applied in real time to optimize the sound for a specific venue and audience.

Key Facts

- Year: 1940s-Present

- Origin: United States / United Kingdom

- Category: aesthetics

- Type: concept

Frequently Asked Questions

What is the primary goal of music mixing?

The primary goal of music mixing is to optimize and combine individual multitrack recordings into a final, cohesive audio product. This involves balancing the relative levels of each track, shaping their tonal characteristics with equalization, controlling their dynamic range with compression, and placing them within the stereo or surround sound field to create a clear, impactful, and emotionally resonant listening experience. Ultimately, it's about making all the individual elements work together harmoniously to serve the song's artistic vision.

How does genre influence mixing techniques?

Genre strongly shapes mixing techniques because different musical styles have distinct sonic priorities. For instance, [[hip-hop-music|hip-hop]] mixes often emphasize a powerful, punchy low-end and clear vocal presence, utilizing heavy compression and prominent basslines. [[classical-music|Classical music]] mixing, conversely, prioritizes capturing the natural acoustics of an ensemble and maintaining a wide dynamic range, often with minimal processing. [[rock-music|Rock]] mixes might focus on the energy of the guitars and drums, while [[electronic-dance-music|EDM]] frequently employs aggressive automation, sidechain compression (where one signal, typically the kick drum, triggers gain reduction on another, such as the bass), and wide stereo imaging to create a driving, energetic feel.
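Sidechain compression, mentioned above for EDM, can be sketched as a gain on one signal that follows the envelope of another. The version below is deliberately simplified: it uses an instant attack and a fixed per-sample release factor instead of a full compressor, and the names `envelope`, `sidechain_duck`, `depth`, and `release` are hypothetical.

```python
def envelope(trigger, release=0.9):
    """Peak envelope follower: instant attack, exponential release.

    `release` is the per-sample decay factor (0 < release < 1).
    """
    level = 0.0
    out = []
    for sample in trigger:
        level = max(abs(sample), level * release)
        out.append(level)
    return out


def sidechain_duck(target, trigger, depth=0.8, release=0.9):
    """Attenuate `target` in proportion to the envelope of `trigger`.

    `depth` is the maximum fraction of gain removed when the trigger
    envelope reaches full scale (1.0).
    """
    env = envelope(trigger, release)
    return [t * (1.0 - depth * min(e, 1.0)) for t, e in zip(target, env)]


# A constant bass note ducked by a single kick hit on the first sample.
bass = [1.0, 1.0, 1.0]
kick = [1.0, 0.0, 0.0]
print(sidechain_duck(bass, kick, depth=0.5, release=0.5))
# [0.5, 0.75, 0.875]
```

The bass dips when the kick hits and then recovers as the envelope decays, which is the characteristic "pumping" effect.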

What are the key differences between mixing and mastering?

Mixing and mastering are distinct but sequential stages in audio production. Mixing is the process of blending individual multitrack recordings into a stereo or surround sound file, focusing on the balance, tone, and spatial placement of each instrument and vocal. Mastering, on the other hand, is the final step where a finished stereo mix is prepared for distribution. A mastering engineer optimizes the overall loudness, tonal balance, and consistency across multiple tracks on an album, ensuring it translates well across various playback systems and meets industry standards, often applying subtle EQ, compression, and limiting.

Can AI truly replace human mixing engineers?

While AI tools are rapidly advancing and can automate many technical aspects of mixing, such as level balancing and basic EQ adjustments, they currently lack the nuanced artistic judgment and creative intuition of experienced human engineers. AI can provide a solid starting point or assist with tedious tasks, but the ability to interpret an artist's emotional intent, make subjective creative decisions, and develop a unique sonic signature remains a human domain. The debate continues, but most experts believe AI will serve as a powerful assistant rather than a complete replacement for human creativity in mixing.

What is 'panning' in audio mixing?

Panning refers to the distribution of an audio signal across the stereo or surround sound field. In a stereo mix, panning controls determine how much of a sound is heard in the left speaker versus the right speaker. For example, a lead vocal is typically panned center, while background vocals or instruments might be panned hard left or right to create width and separation. In surround sound, panning extends this concept to include front, rear, and height channels, allowing for a more immersive, three-dimensional soundscape. Effective panning is crucial for creating a sense of space, clarity, and stereo width in a mix.
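One common way to implement a stereo pan control is a constant-power (sine/cosine) pan law, which keeps the combined power of the two channels steady as a sound moves across the field. The sketch below is one such law under assumed conventions: the function name is hypothetical, and `pan` runs from -1 (hard left) through 0 (center) to +1 (hard right). DAWs offer several pan laws, so this is not the behavior of any specific product.

```python
import math
from typing import Tuple


def constant_power_pan(sample: float, pan: float) -> Tuple[float, float]:
    """Return (left, right) samples for a mono input.

    pan = -1.0 is hard left, 0.0 is center, +1.0 is hard right.
    The gains are cos/sin of an angle between 0 and pi/2, so
    left_gain**2 + right_gain**2 is always 1.
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1.0 and 1.0")
    angle = (pan + 1.0) * math.pi / 4.0
    return sample * math.cos(angle), sample * math.sin(angle)


left, right = constant_power_pan(1.0, 0.0)
print(round(left, 4), round(right, 4))  # 0.7071 0.7071
```

At center each channel receives about 0.707 of the signal (roughly -3 dB), so a centered sound does not seem louder than one panned to a side.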

How can I start learning music mixing techniques?

To begin learning music mixing, start by acquiring a [[digital-audio-workstation|Digital Audio Workstation (DAW)]] like [[ableton-live|Ableton Live]], [[logic-pro|Logic Pro]], or [[pro-tools|Pro Tools]]. Familiarize yourself with its interface and basic functions. Then, focus on understanding core concepts: gain staging, [[equalization|EQ]], [[compression|compression]], [[panning|panning]], and [[reverb|reverb]]. Practice by mixing pre-made multitrack sessions available online from sources like [[cambridge-mt|Cambridge Music Technology]] or [[indi-one-stop-shop|Indie Music Academy]]. Listen critically to professionally mixed songs in genres you admire, analyzing how different elements are treated. Consider online courses or tutorials from reputable sources like [[mix-with-the-masters|Mix With The Masters]] or [[puremix-net|PureMix]].

What is the significance of dynamic range in mixing?

Dynamic range is the difference between the loudest and quietest parts of an audio signal, usually expressed in decibels. In mixing, managing dynamic range is crucial for creating impact, clarity, and emotional expression. A wide dynamic range allows for subtle nuances and dramatic shifts, making music feel more alive and engaging. However, very quiet passages can get lost, and extremely loud passages can be fatiguing. Compression is often used to control dynamic range: it reduces the loudest peaks so the overall level can be raised, making the mix more consistent and perceived as louder. Over-compression, however, can crush the life out of a mix, a phenomenon central to the 'loudness war' debate. Balancing dynamics is key to a professional-sounding mix.
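As a rough illustration of the definition above, dynamic range can be estimated by measuring the RMS level of consecutive windows of a signal and taking the difference between the loudest and quietest window in dB. This is a simplified sketch with hypothetical function names, not a standardized loudness measurement, and the choice of window length is an assumption that strongly affects the result.

```python
import math


def rms(samples):
    """Root-mean-square amplitude of a non-empty list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def window_levels_db(samples, window):
    """RMS level in dB of each full, non-overlapping window.

    Completely silent windows are skipped because their level in dB
    is undefined (log of zero).
    """
    levels = []
    for start in range(0, len(samples) - window + 1, window):
        level = rms(samples[start:start + window])
        if level > 0.0:
            levels.append(20.0 * math.log10(level))
    return levels


def dynamic_range_db(samples, window):
    """Difference in dB between the loudest and quietest window."""
    levels = window_levels_db(samples, window)
    if not levels:
        raise ValueError("no non-silent windows to measure")
    return max(levels) - min(levels)


# A quiet passage at amplitude 0.1 followed by a loud one at 1.0.
signal = [0.1] * 4 + [1.0] * 4
print(round(dynamic_range_db(signal, window=4), 1))  # 20.0
```

A tenfold difference in amplitude corresponds to 20 dB, which is what the example reports.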